In most SAP programs, test data is not treated as a strategic topic.

And yet it quietly determines how fast releases move, how stable automation remains, whether audits pass, and how confidently organizations migrate to S/4HANA.

Teams often blame failed test cycles on application defects or unstable integrations.

Look closer and the same root causes surface again and again: refreshes take too long, sensitive production data cannot be reused safely, regression packs break after every cycle, environments drift apart, and nobody clearly owns test data across the landscape.

This article examines the most common test data management challenges in SAP environments, why they persist even in mature organizations, and how leading teams address them in practice.

Why Test Data Management Is So Hard in SAP Landscapes

SAP landscapes are large, interconnected, and heavily regulated.

A single business process might span Finance, Procurement, Manufacturing, Logistics, and HR. Each step relies on tightly coupled master data, configuration, and historical records. When that web of relationships is copied incompletely or masked incorrectly, test results become unreliable.

The pressure has intensified in recent years. S/4HANA conversions introduce new data models. Agile delivery increases release frequency. Automation runs nightly. Cloud and hybrid landscapes multiply system variants. Regulators demand stricter data protection.

Test data management, which covers extraction, masking, subsetting, refresh, governance, and retirement of non-production datasets, has moved from operational nuisance to delivery-critical capability.

If you are earlier in the maturity curve, it helps to step back and understand how SAP teams normally approach test data across its full lifecycle, from creation and masking through refresh and retirement. We break that foundation down in our SAP Test Data Management guide.

7 Real Test Data Management Challenges in SAP (And How Teams Fix Them)

These are not theoretical problems.

They appear in ECC and S/4 landscapes, in brownfield conversions, greenfield builds, regulated industries, and agile delivery programs.

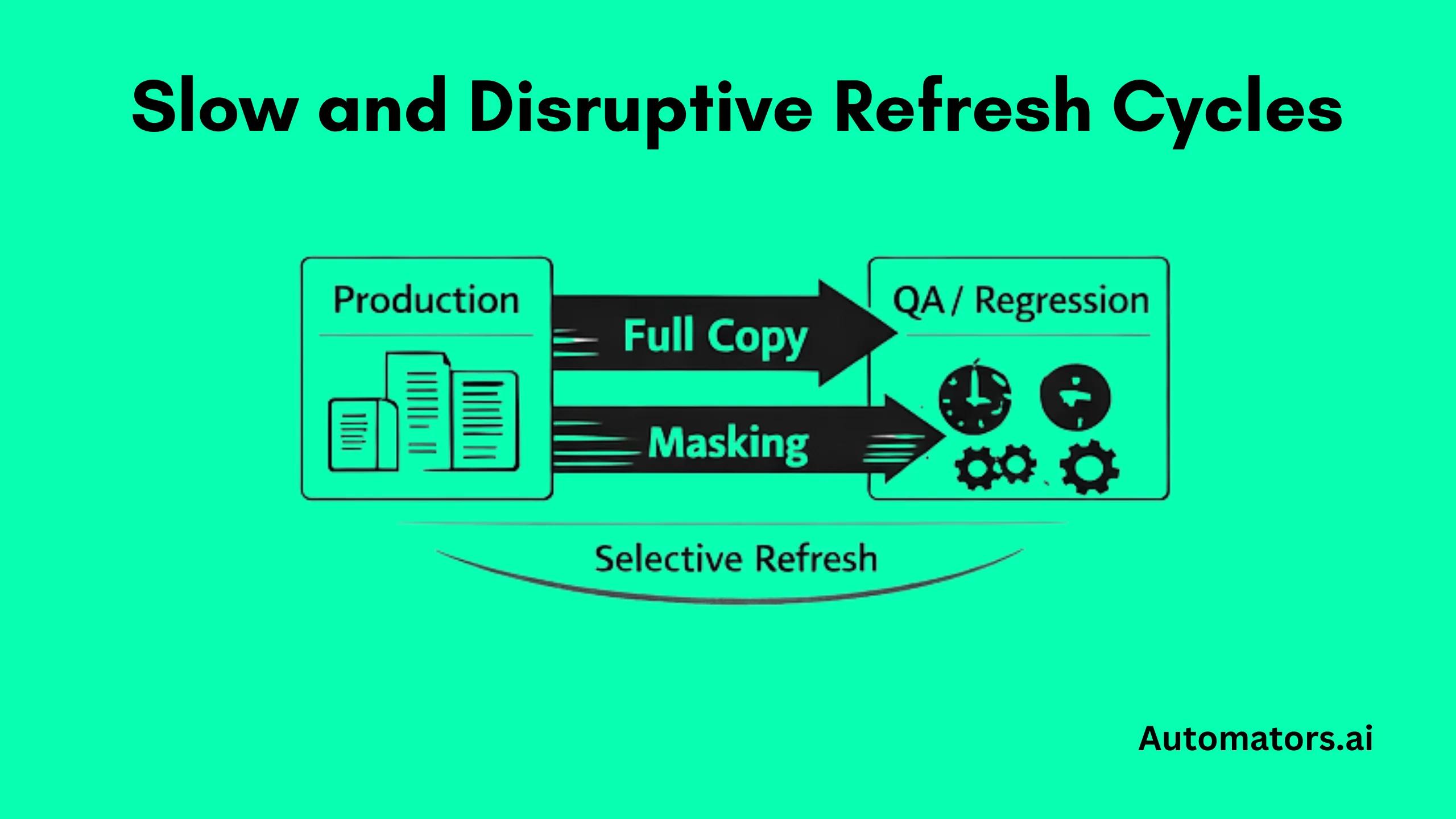

1) Slow and Disruptive Refresh Cycles

In large SAP estates, refreshing a QA or regression system often becomes a mini-cutover. Database copies run for days. Transports are frozen.

Masking jobs extends the downtime. After systems reopen, testers spend another week repairing broken scenarios and automation.

Business users lose confidence in test calendars because environments are constantly unavailable.

Why it keeps happening

Most programs default to full system copies because deciding what data is required feels risky. Masking is added after the copy, rather than built into the pipeline.

Automation datasets are not regenerated automatically, so every refresh destroys the very records regression depends on. Over time, refresh cycles grow longer rather than shorter.

Refresh bottlenecks are so common that many programs treat them as inevitable, even though they usually stem from design choices rather than technical limits. We analyze those failure patterns and recovery strategies in detail in SAP Test Data Refresh guide.

What mature teams change

High-performing programs stop refreshing everything by default.

They classify upcoming test scope first. A financial close rehearsal may genuinely require production-derived balances, but a pricing regression pack usually does not. From that analysis they design selective refreshes and incremental loads that move only the business entities needed for the next cycle.

Automation-critical datasets are recreated immediately after refresh through scripted rebuilds rather than patched manually.

How tools fit

Baseline SAP copy utilities or TDMS often remain part of the process for large refreshes. Landscape-orchestration tooling coordinates timing and dependencies. Test-data platforms handle subsetting, masking, and regeneration. Synthetic generators are added once scenarios need to be rebuilt repeatedly across cycles.

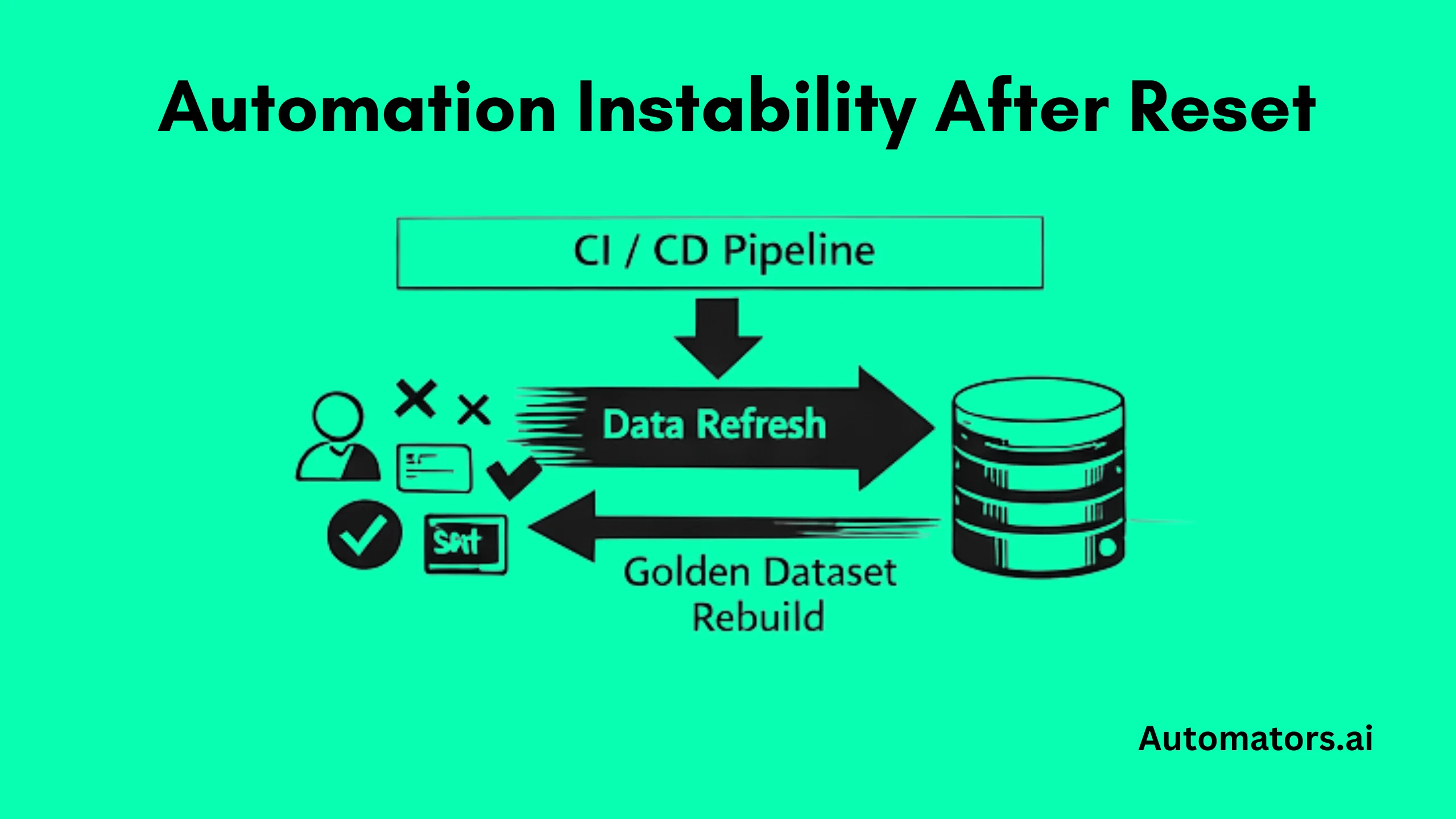

2) Automation Instability After Every Reset

Nightly regressions fail even when no transports have moved. Scripts suddenly complain about missing customers, archived invoices, or financial balances that no longer exist.

Engineers keep private spreadsheets of “safe” IDs or hard-code document numbers into test cases just to keep pipelines green. CI jobs become noisy, failures are ignored, and automation slowly loses credibility with delivery teams.

Why this shows up again and again

Automation is usually built on top of whatever data happens to be in the system at the time. Refreshes wipe key records. Business users adjust master data during UAT. Migration rehearsals overwrite carefully prepared scenarios.

Because no canonical datasets exist for automation, every environment reset destabilizes scripts. The testing framework gets blamed, even though the real culprit is volatile data underneath.

How strong programs respond

Mature teams define a small number of canonical datasets that automation depends on, often called “golden datasets.” These include specific business partners, materials, pricing conditions, open AR and AP items, and end-to-end document chains that represent critical flows such as order-to-cash or procure-to-pay.

Those datasets are not protected manually. They are rebuilt automatically after every refresh as part of environment setup. Test cases reference logical identifiers or scenario tags rather than physical document numbers wherever possible.

Automation packs are also explicitly mapped to the data they require, so the impact of a refresh becomes predictable instead of disruptive.

What usually supports this in practice

Synthetic data generators like DataMaker and test-data-management platforms provide regeneration logic. Automation frameworks with strong parameterization reduce reliance on fixed IDs. Orchestration layers trigger rebuild jobs before test execution begins.

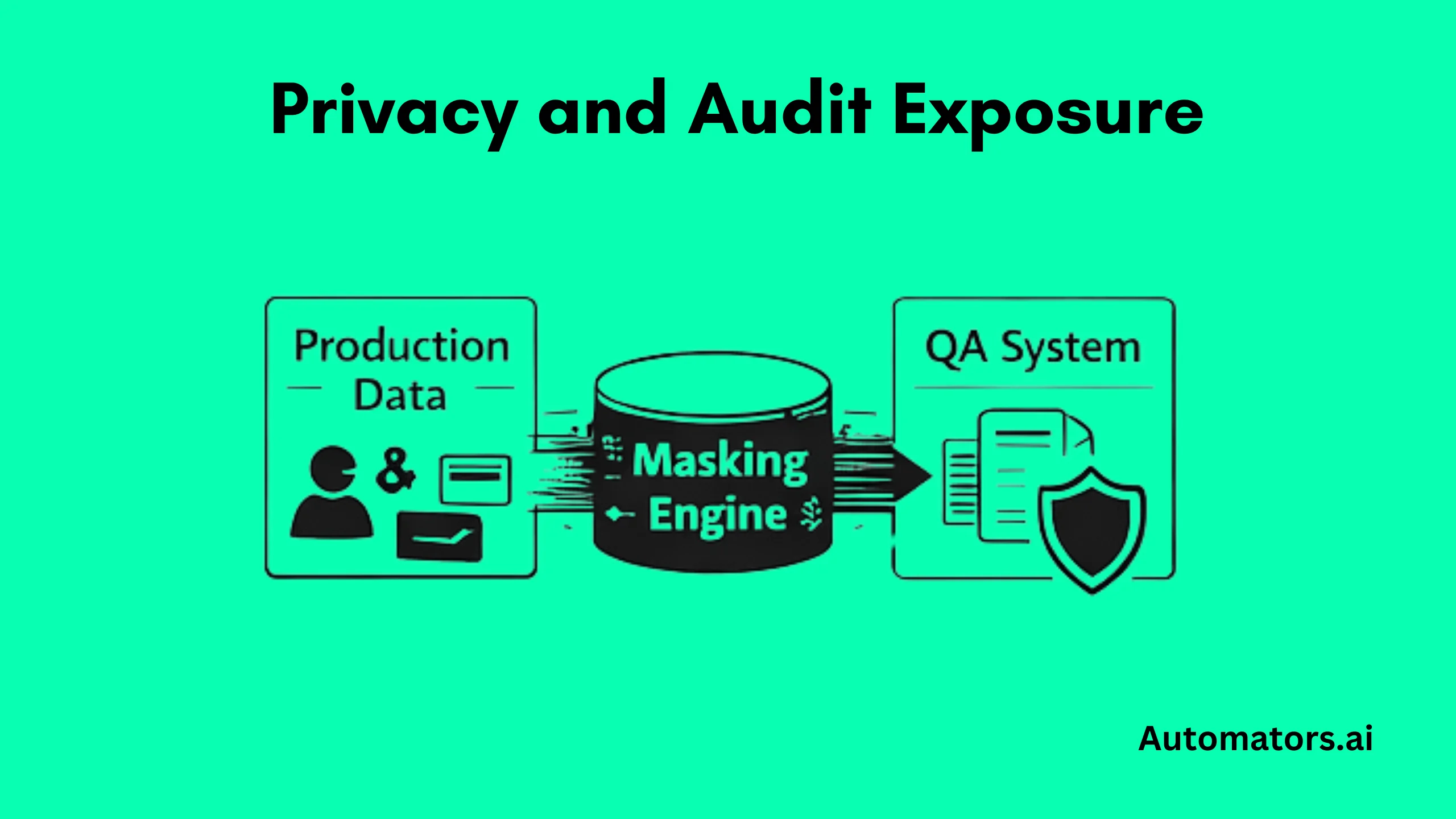

3) Privacy, Masking, and Audit Exposure

1QA systems end up containing real employee names, payroll details, bank accounts, and customer addresses. Compliance teams step in late, usually just before UAT or audit checkpoints.

Masking scripts are rushed through and break core business behavior. Payment runs fail because account formats changed. Tax calculations produce nonsense. Dunning and credit management stop working. UAT stalls while risk assessments are debated.

Why this keeps happening

Sensitive fields are often identified only at table level, not at process level. Masking is added after copies instead of designed into refresh pipelines. Compliance stakeholders are pulled in late, under delivery pressure.

As a result, formats required by downstream logic are destroyed during scrambling, and relationships between fields are lost.

How mature SAP programs fix this

Stronger programs design masking alongside business processes, not as a technical afterthought. They map which fields drive pricing, tax determination, payment media, credit checks, and statutory reporting.

Masking rules preserve formats, ranges, and cross-field dependencies rather than blindly randomizing values. Payroll numbers still look like payroll numbers. IBANs keep valid structures. Tax jurisdiction codes remain consistent.

Masking is embedded directly into refresh workflows, and every cycle produces audit evidence showing what was copied, how it was transformed, and which systems received it.

What usually supports this in practice

TDM platforms with transformation engines handle process-aware masking. SAP copy tooling supports embedded transformations. Governance systems and logging frameworks capture the audit trails regulators expect.

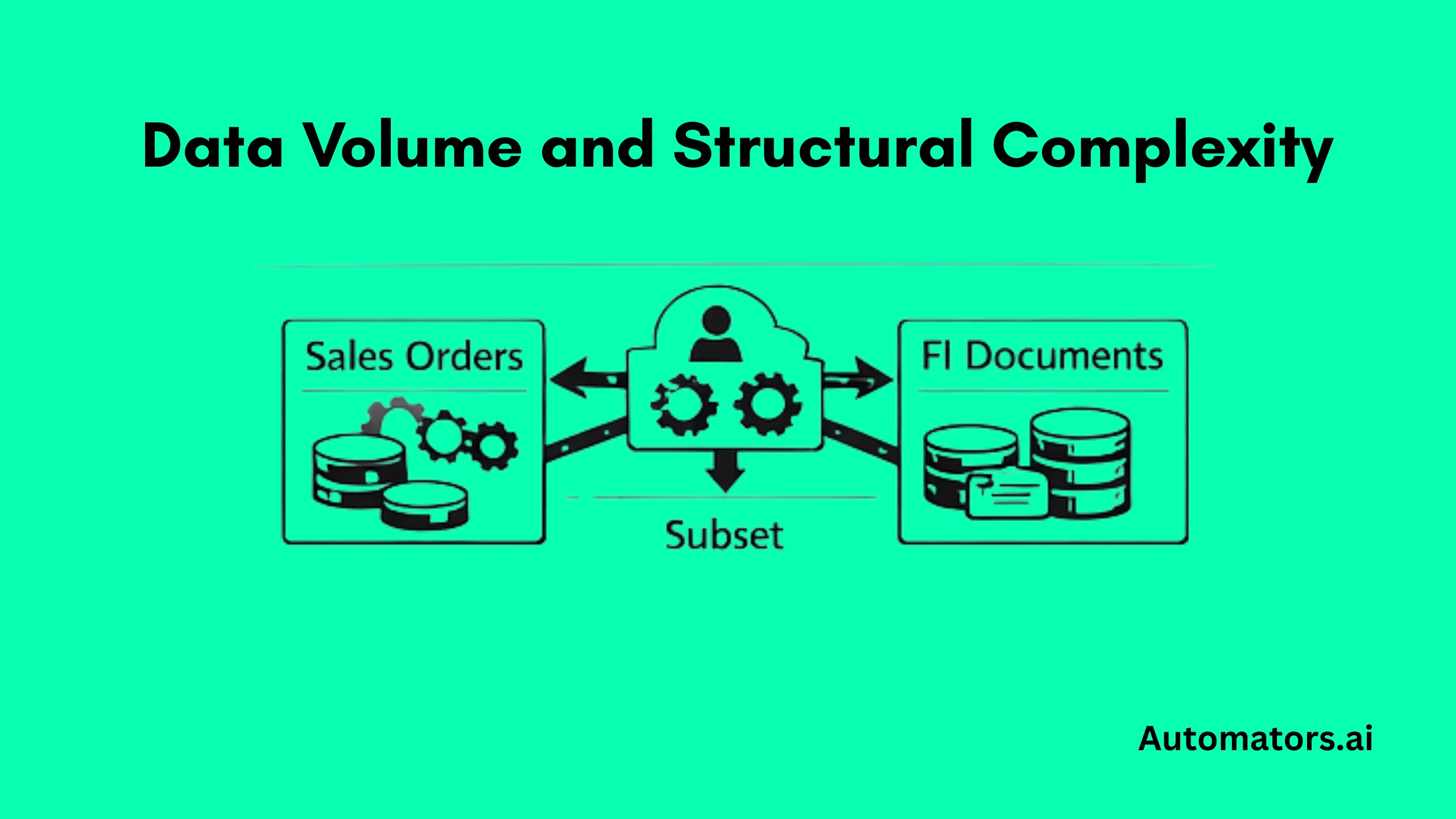

4) Data Volume and Structural Complexity

In SAP, a single customer or vendor is rarely just one record. It is tied to pricing conditions, credit segments, partner functions, deliveries, invoices, FI documents, and controlling postings.

When teams try to subset data naively, part of that chain disappears and transactions stop posting. A billing document fails because the pricing condition is missing. Settlement breaks because a controlling object was not carried over. The alternative is full system copies, which keep everything but drive up storage costs and stretch refresh windows into days.

Why this keeps happening

Most extraction logic is written around tables rather than business entities. Cross-module dependencies are poorly documented, especially in systems with years of custom enhancements, user exits, and industry add-ons.

Over time, each project adds new relationships that no one models centrally, so subsetting becomes brittle and risky.

How mature teams handle it

Instead of thinking in tables, strong programs subset around complete business objects: an order-to-cash chain from customer master through billing and FI, or a vendor invoice through settlement and payment run.

Those subsets are kept intentionally small and refreshed frequently. Synthetic data is layered on top to create rare scenarios, edge cases, or the transaction volumes needed for stress tests, so environments remain lean without sacrificing coverage.

What usually supports this in practice

Entity-aware TDM platforms model cross-object dependencies. ETL tools with lineage tracking help trace custom relationships. Synthetic data generators handle scale testing and uncommon scenarios.

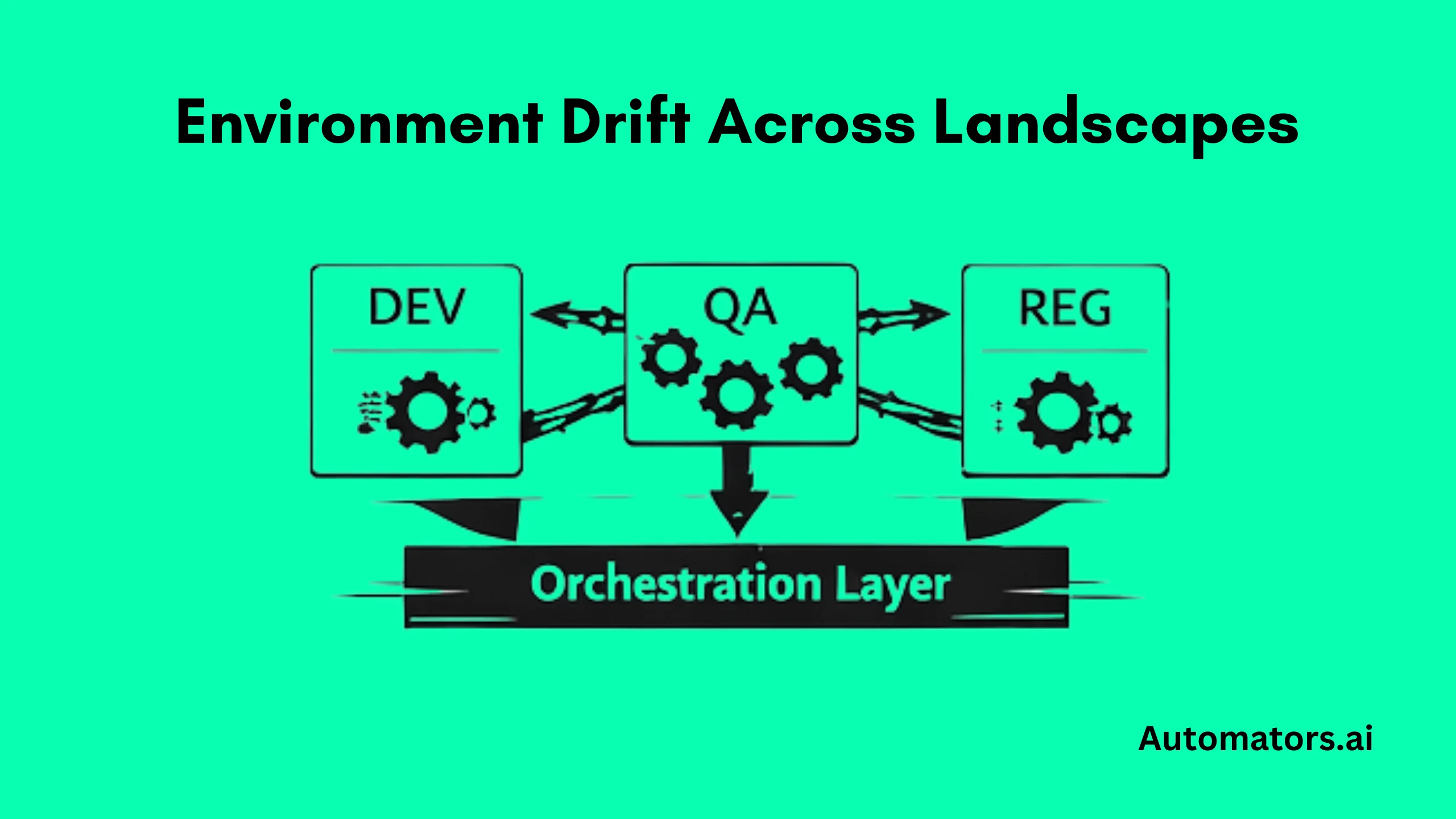

5) Environment Drift Across Landscapes

The same test case passes in DEV, fails in QA, and behaves differently again in regression. Pricing works in one system but not another. A business partner exists in QA but not in training. One environment was refreshed last week, another three months ago.

Defect triage turns into archaeology. Teams argue about whether a problem is code, configuration, or data.

Why this keeps happening

Refresh schedules differ by system. Emergency fixes are applied locally during UAT and never propagated. Data is patched manually to unblock testers. Over time, each landscape evolves independently.

Without standardized provisioning pipelines, environments drift until they no longer represent comparable states.

How strong programs respond

Mature programs centralize provisioning. Refresh pipelines are standardized across DEV, QA, regression, and training systems. Dataset rebuilds, masking jobs, and automation initialization steps are orchestrated consistently rather than executed ad hoc.

Manual fixes are logged deliberately and either promoted into transportable configuration or eliminated during the next refresh cycle, instead of lingering silently.

What usually supports this in practice

Landscape-management platforms coordinate system operations. CI workflows orchestrate refreshes and rebuilds. TDM platforms standardize provisioning logic and reduce one-off manual intervention.

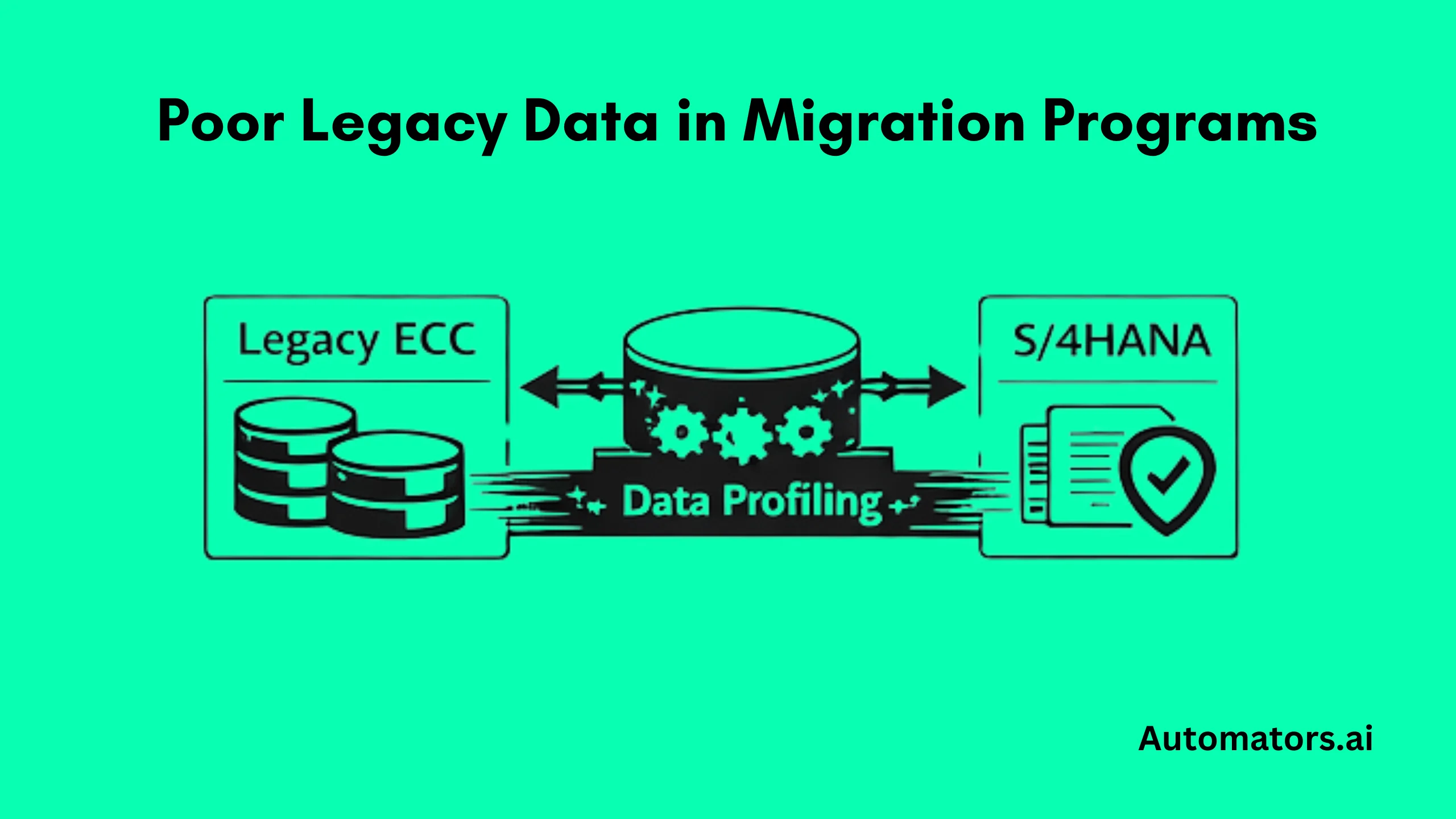

6) Poor Legacy Data in Migration Programs

During ECC to S/4 conversions, test cycles explode with thousands of “defects” that turn out not to be code issues at all. Vendors exist twice under different numbers. Materials lack required attributes for new S/4 processes. Hierarchies no longer line up with controlling structures. Opening balances and stock figures fail reconciliation.

Testing slows to a crawl while teams argue about whether failures come from the migration logic or from twenty years of accumulated data problems.

Why this keeps happening

Many programs postpone serious data cleansing until late cutover tracks. Test systems inherit raw legacy states, and migration loads are reused for everyday regression even though those datasets are unstable by design.

Profiling is shallow, and reconciliation is treated as a finance-only activity instead of a core testing discipline.

How strong programs deal with it

Mature programs profile legacy systems early, before large-scale testing begins. Duplicates, missing fields, obsolete records, and structural inconsistencies are identified and cleaned upstream.

Migration-specific datasets are maintained separately from steady-state regression packs, so day-to-day automation is not constantly destabilized by conversion experiments.

Finance and logistics teams work with curated reconciliation datasets that include opening balances, inventory stock, asset values, and open AR and AP items, allowing conversion correctness to be proven quickly instead of debated endlessly.

What usually supports this in practice

Migration cockpits handle transformation logic. Data-quality platforms support profiling and cleansing. Reconciliation tooling validates financial and logistical integrity. Synthetic generators provide clean baselines for non-migration testing cycles.

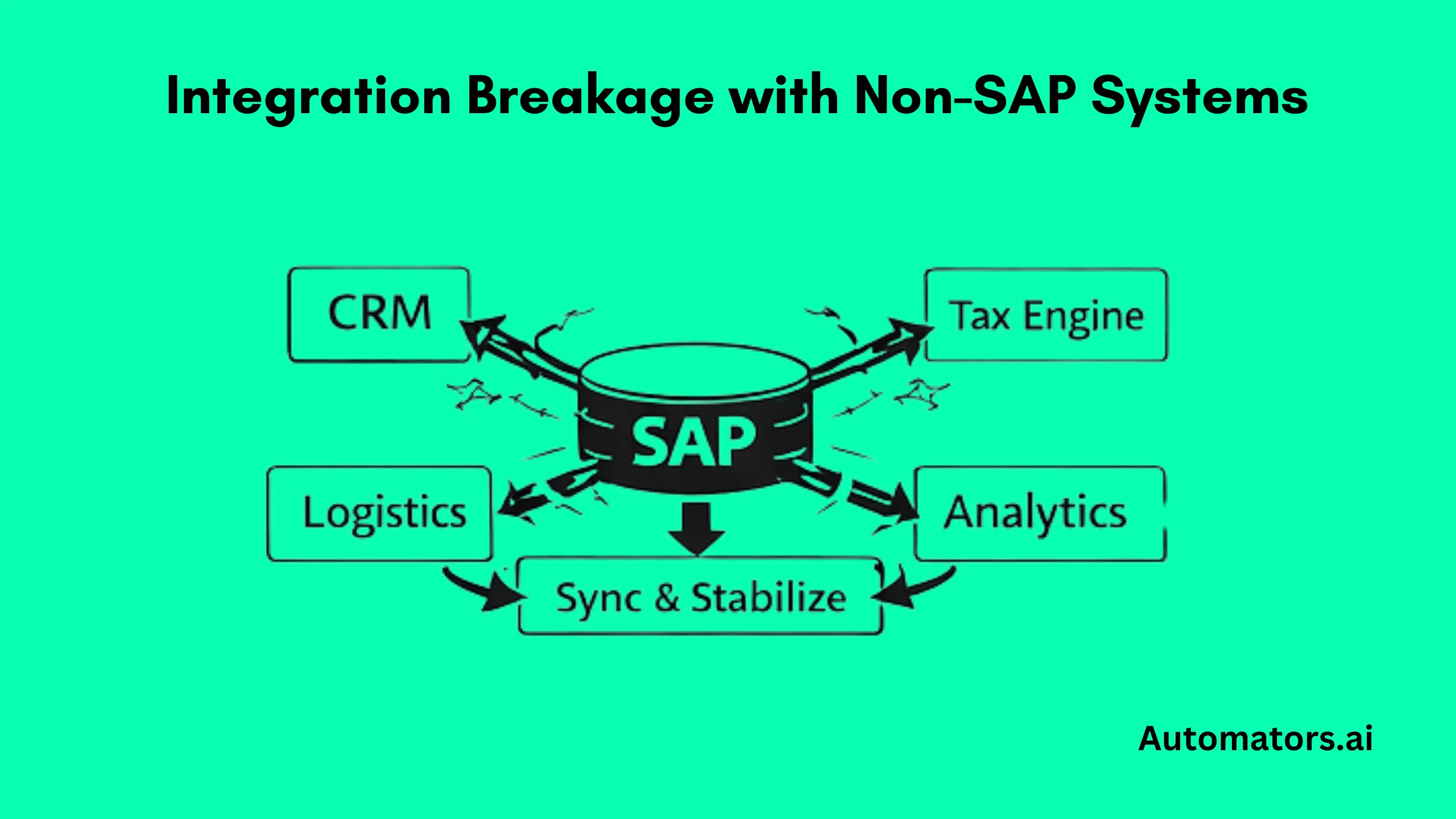

7) Integration Breakage With Non-SAP Systems

After an SAP refresh, downstream systems suddenly stop working. CRM records no longer match business partners. Tax engines reject invoices. Logistics providers cannot process deliveries. Analytics dashboards show nonsense.

Interfaces that worked yesterday collapse because reference data changed, identifiers were regenerated, or masked values no longer line up. Integration testing becomes unpredictable and slow.

Why this keeps happening

SAP environments are refreshed independently, while connected platforms are left untouched or rebuilt manually weeks later. Masking rules differ across systems. Reference data is synchronized inconsistently, if at all.

Each refresh introduces new mismatches that ripple through the landscape.

How mature programs respond

High-performing organizations treat SAP and its surrounding systems as a single test ecosystem.

Cross-system refresh orchestration is introduced so SAP resets trigger coordinated updates downstream. Reference data such as customers, tax codes, plants, and shipping points is synchronized deliberately.

Where full replication is impractical, teams stabilize interfaces using stubs or synthetic payloads that preserve formats and identifiers, keeping end-to-end flows testable even while systems evolve.

What usually supports this in practice

API-virtualization platforms simulate external responses. Integration-testing frameworks validate message flows. Orchestration layers coordinate resets. Synthetic data generators stabilize payloads and reference objects across systems.

A Practical Improvement Roadmap

Teams tackling SAP test data problems rarely fix everything at once. The most successful programs move in stages.

They start by mapping environments and refresh cycles. Next they identify which test packs depend on which datasets. Then they pilot subsetting or synthetic generation in a single domain, measure cycle-time reductions, and scale gradually.

Metrics matter. Mature teams track refresh duration, stabilization effort after copies, automation failure rates caused by data, and the percentage of tests that can run without manual setup.

Those numbers quickly reveal whether structural change is working.

Final Thoughts

SAP test data problems rarely come from a single broken tool or missed script. They emerge when refresh strategies, masking rules, automation needs, migration programs, and governance models evolve independently instead of being designed as one operating system.

Teams that make real progress stop treating test data as an afterthought. They decide which scenarios truly require production-derived data. They stabilize automation through regenerable datasets. They embed compliance into refresh pipelines instead of debating it during UAT. They orchestrate environments rather than letting them drift.

The organizations that master these disciplines shorten test cycles, reduce firefighting, and approach major SAP releases with far more confidence. Not because they work harder, but because their test data is engineered as deliberately as the systems it supports.