In SAP transformation programs, systems can appear stable while financial data is quietly misaligned. Transactions post successfully. Reports run. Users operate normally.

Yet during reconciliation, teams discover that General Ledger balances differ between systems, subledgers do not fully reconcile, or historical totals shift after migration.

Functional testing confirms that processes execute. Performance testing confirms that the system scales. Neither proves that migrated or transformed records remain numerically correct across ledgers, currencies, and fiscal periods.

SAP data integrity testing closes that gap.

This guide explains what must be validated, how to design a defensible validation framework, and how to execute it at enterprise scale.

What Data Integrity Testing Actually Checks

SAP data integrity testing verifies that financial and transactional data remains numerically accurate after system change. It compares source and target datasets to confirm completeness, consistency, and financial correctness.

In practice, validation operates at three distinct levels:

| Validation Level | What Is Being Verified | Typical Example in SAP |
|---|---|---|
| Balance Validation | Aggregate balances reconcile across reporting dimensions such as company code, ledger, fiscal year, period, and currency type | GL ending balance in ECC matches S/4HANA after migration within defined tolerance |
| Document Validation | Individual records are complete and unchanged at line-item level | No missing or duplicate accounting documents; posting date, amount, account assignment, and clearing status remain consistent |
| Cross-Module Validation | Financial impact of integrated processes remains numerically identical | SD billing document posts the same revenue and accounting entries in FI before and after migration |

Balance validation confirms totals. Document validation confirms completeness. Cross-module validation confirms integrated financial behavior.

The objective is clear: prove that financial data remains trustworthy after migration, configuration change, or structural transformation.

When Structured Validation Is Necessary

Structured validation becomes critical when structural change introduces transformation risk.

Common triggers include:

- ECC to S/4HANA migration

- Ledger redesign or currency changes

- System carve-outs or mergers

- Major configuration changes

- Integration redesign

- Regulatory remediation

In these scenarios, millions of records may be migrated or recalculated. Even small mapping inconsistencies can accumulate into material balance differences.
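To make the accumulation effect concrete, the sketch below uses hypothetical figures: one million identical 10.00 line items translated at a rate of 1.0833 and rounded half-up to two decimals. Each translated line is within half a cent of its exact value, yet rounding per line and rounding the aggregated balance once differ by 3,000.00. It illustrates the arithmetic only; it is not a model of how SAP performs currency translation.

```python
from decimal import Decimal, ROUND_HALF_UP

CENT = Decimal("0.01")


def convert(amount: Decimal, rate: Decimal) -> Decimal:
    """Translate an amount and round to two decimals (half-up)."""
    return (amount * rate).quantize(CENT, rounding=ROUND_HALF_UP)


# Hypothetical scenario: one million identical line items, one exchange rate
line_amount = Decimal("10.00")
rate = Decimal("1.0833")
line_count = 1_000_000

# Round each line item (10.833 -> 10.83), then sum
per_line_total = convert(line_amount, rate) * line_count
# Translate the aggregated balance once, then round
balance_total = convert(line_amount * line_count, rate)

print(per_line_total, balance_total, balance_total - per_line_total)
# 10830000.00 10833000.00 3000.00
```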

At enterprise scale, manual reconciliation is not sustainable. Validation must be structured and repeatable.

Understanding what must be validated is only the starting point.

The real challenge is designing a reconciliation process that is defensible, repeatable, and scalable.

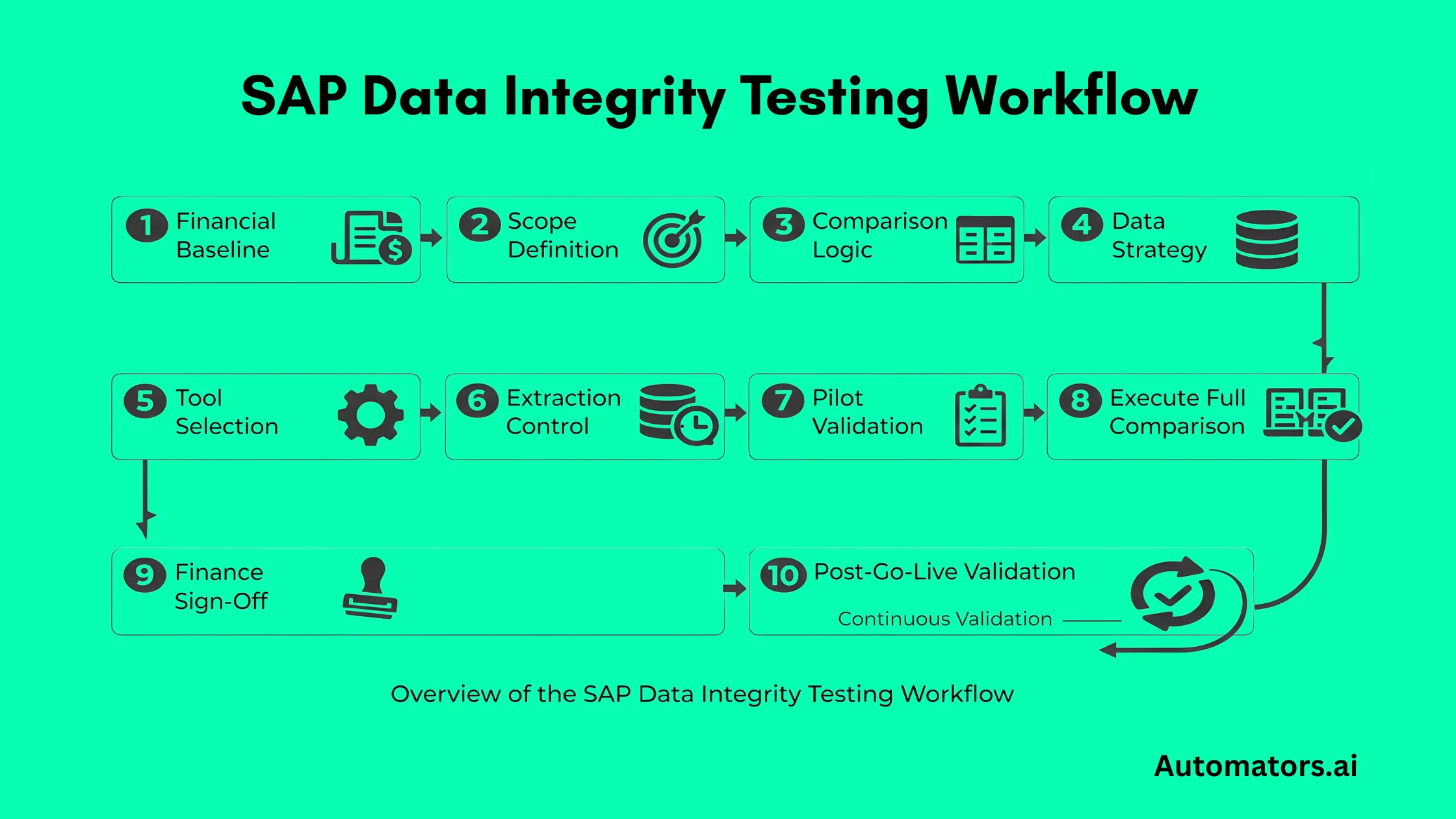

Below is a practical, end-to-end workflow that combines planning and execution into a single structured approach.

Step-by-Step Workflow for SAP Data Integrity Testing

Step 1: Anchor Validation to a Financial Baseline

Before extracting anything, identify the financial baseline that must remain stable.

For example:

- Trial balance by company code and ledger at period close

- Total open AP balance at migration cutover

- Inventory valuation per plant and valuation area

Pull these numbers directly from the source system using controlled reporting (for example, FAGLB03, FBL1N totals, or equivalent aggregated views, depending on the landscape).

Document:

- Reporting transaction used

- Selection parameters

- Fiscal period

- Currency type

These numbers become your control totals.

If your reconciliation cannot tie back to a documented financial baseline, it has no control anchor.
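One way to keep control totals auditable is to store each baseline figure together with the documentation listed above. The sketch below is a minimal, hypothetical record structure; the field names, the example values, and the simple absolute-tolerance check are assumptions for illustration, not part of any SAP or tool API.

```python
from dataclasses import dataclass
from decimal import Decimal


@dataclass(frozen=True)
class ControlTotal:
    """A documented baseline figure from the source system (hypothetical structure)."""
    description: str                        # what the figure represents
    report: str                             # reporting transaction used, e.g. "FAGLB03"
    selection: tuple[tuple[str, str], ...]  # selection parameters as entered
    fiscal_year: int
    period: int
    currency_type: str                      # e.g. "10" for company code currency
    amount: Decimal

    def ties_to(self, target_amount: Decimal, tolerance: Decimal) -> bool:
        """True if a target-side figure matches this baseline within an absolute tolerance."""
        return abs(self.amount - target_amount) <= tolerance


# Hypothetical baseline: trial balance for one company code and the leading ledger
baseline = ControlTotal(
    description="Trial balance, company code 1000, leading ledger",
    report="FAGLB03",
    selection=(("company_code", "1000"), ("ledger", "0L")),
    fiscal_year=2024,
    period=12,
    currency_type="10",
    amount=Decimal("1250000.00"),
)

print(baseline.ties_to(Decimal("1250000.00"), Decimal("0.01")))  # True
```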

Step 2: Translate Financial Controls into Technical Extraction Scope

Now determine where the data resides technically.

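In ECC, financial postings are spread across classic tables, for example BKPF (document header) and BSEG (line items), plus totals tables such as FAGLFLEXT (New GL) or GLT0 (classic GL). In S/4HANA, financial postings are consolidated in the Universal Journal (table ACDOCA).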

This means your extraction logic must align source and target data models before comparison.

Define clearly:

- Which tables or CDS views will be used in each system

- Whether reconciliation is balance-based or line-item-based

- Whether historical years are fully migrated or summarized

At this point, define validation depth:

- Aggregate-level validation for prior fiscal years

- Line-item-level validation for the current fiscal year and open items

Write this scope definition in technical terms. Do not rely only on business labels like “GL” or “AP.”
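A scope definition written in technical terms can be kept as structured data rather than prose, so it can be reviewed and versioned alongside the reconciliation logic. The sketch below assumes a hypothetical `ReconciliationScope` structure. The table names are standard ECC and S/4HANA objects (FAGLFLEXT for New GL totals, BKPF/BSEG for document header and line items, ACDOCA for the Universal Journal), but which objects apply depends on the landscape, for example whether classic GL or New GL was active.

```python
from dataclasses import dataclass

DEPTHS = ("aggregate", "line_item")


@dataclass
class ReconciliationScope:
    """Technical scope for one reconciliation object (hypothetical structure)."""
    name: str
    source_objects: list[str]    # tables or CDS views read in the source system
    target_objects: list[str]    # tables or CDS views read in the target system
    fiscal_years: list[int]
    depth: str                   # "aggregate" or "line_item"
    history_summarized: bool     # True if these years were migrated as balances only

    def validate(self) -> None:
        """Reject scope definitions that cannot be executed as written."""
        if self.depth not in DEPTHS:
            raise ValueError(f"{self.name}: unknown depth {self.depth!r}")
        if self.depth == "line_item" and self.history_summarized:
            raise ValueError(f"{self.name}: line-item comparison needs line items in the target")


# Hypothetical split: balances for prior years, line items for the current year
scopes = [
    ReconciliationScope("GL prior years", ["FAGLFLEXT"], ["ACDOCA"],
                        [2021, 2022, 2023], "aggregate", history_summarized=True),
    ReconciliationScope("GL current year", ["BKPF", "BSEG"], ["ACDOCA"],
                        [2024], "line_item", history_summarized=False),
]
for scope in scopes:
    scope.validate()
```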

Step 3: Design Matching Logic Before Choosing a Tool

Comparison logic must be system-aware.

For example:

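If document numbers are preserved, matching may be done on company code + document number + fiscal year + line item, since accounting document numbers are only unique within a company code and fiscal year.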

If business partner harmonization changed vendor and customer representation, matching must account for that transformation.

If account structures were reorganized, balances may need to be aggregated to a common reporting hierarchy before comparison.
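Aggregating to a common reporting hierarchy can be sketched as a roll-up through a mapping table. In this minimal example the account numbers, the mapping, and the reporting node name are hypothetical; unmapped accounts are returned separately so they surface as findings instead of silently disappearing from the totals.

```python
from collections import defaultdict
from decimal import Decimal


def aggregate_by_mapping(balances, account_map):
    """Roll account balances up to shared reporting nodes.

    balances:    dict of account -> Decimal balance
    account_map: dict of account -> reporting node (hypothetical mapping table)
    Returns (totals per node, unmapped accounts); unmapped accounts are kept
    separately so they can be investigated rather than silently dropped.
    """
    totals = defaultdict(Decimal)  # Decimal() starts at Decimal("0")
    unmapped = {}
    for account, amount in balances.items():
        node = account_map.get(account)
        if node is None:
            unmapped[account] = amount
        else:
            totals[node] += amount
    return dict(totals), unmapped


# Hypothetical example: two ECC revenue accounts merged into one S/4HANA account
source = {"400000": Decimal("700.00"), "400100": Decimal("300.00")}
target = {"41000000": Decimal("1000.00")}
src_totals, src_unmapped = aggregate_by_mapping(source, {"400000": "Revenue", "400100": "Revenue"})
tgt_totals, tgt_unmapped = aggregate_by_mapping(target, {"41000000": "Revenue"})
print(src_totals == tgt_totals, src_unmapped, tgt_unmapped)  # True {} {}
```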

Define explicitly:

- Matching keys

- Aggregation rules

- Currency alignment logic

- Rounding tolerance (for example, an absolute tolerance of 0.01 or a percentage-based threshold, depending on reporting requirements)

- Treatment of cutover timing differences

Only after this logic is defined does tool selection make sense.
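The matching keys and tolerance can be expressed as a small comparison routine, independent of any tool. This sketch assumes each side has already been extracted and normalized into `(key, amount)` pairs keyed on company code, document number, fiscal year, and line item (line-item numbering formats can differ between source and target, so normalization is assumed to happen earlier). The function names, finding labels, and sample documents are hypothetical.

```python
from collections import Counter
from decimal import Decimal


def _index(rows):
    """Count occurrences and sum amounts per composite key."""
    counts, amounts = Counter(), {}
    for key, amount in rows:
        counts[key] += 1
        amounts[key] = amounts.get(key, Decimal("0")) + amount
    return counts, amounts


def compare_line_items(source_rows, target_rows, tolerance=Decimal("0.01")):
    """Compare (key, amount) rows; key = (company code, document, fiscal year, line item)."""
    src_counts, src_amounts = _index(source_rows)
    tgt_counts, tgt_amounts = _index(target_rows)
    findings = []
    for key in sorted(src_counts.keys() | tgt_counts.keys()):
        if key not in tgt_counts:
            findings.append((key, "missing in target"))
        elif key not in src_counts:
            findings.append((key, "unexpected in target"))
        elif tgt_counts[key] > src_counts[key]:
            findings.append((key, "duplicate in target"))  # checked before amounts
        elif abs(src_amounts[key] - tgt_amounts[key]) > tolerance:
            findings.append((key, "amount difference"))
    return findings


# Hypothetical extracts, already normalized to the same key format
source_rows = [
    (("1000", "5100000001", 2024, 1), Decimal("500.00")),
    (("1000", "5100000001", 2024, 2), Decimal("-500.00")),
    (("1000", "5100000002", 2024, 1), Decimal("120.00")),
    (("1000", "5100000003", 2024, 1), Decimal("99.99")),
]
target_rows = [
    (("1000", "5100000001", 2024, 1), Decimal("500.00")),
    (("1000", "5100000001", 2024, 2), Decimal("-500.00")),
    (("1000", "5100000001", 2024, 2), Decimal("-500.00")),  # duplicated line
    (("1000", "5100000003", 2024, 1), Decimal("100.00")),   # 0.01 rounding
]
for key, finding in compare_line_items(source_rows, target_rows):
    print(key, finding)
```

The 0.01 difference on document 5100000003 is not reported because it sits within the tolerance, while the duplicated line and the missing document are. Duplicates are checked before amounts, so a duplicated line is reported once as a duplicate rather than also as an amount difference.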

Step 4: Define Data Strategy (Real, Masked, or Synthetic)

Final reconciliation must compare real migrated data between source and target systems. However, earlier validation cycles may rely on alternative datasets when full production-like loads are not yet available.

The table below summarizes the common data strategies used during different stages of validation.

| Data Type | Typical Use Case | Advantages | Limitations |
|---|---|---|---|
| Real Production-Derived Data | Final mock migrations, cutover rehearsal, pre-go-live validation | Highest accuracy and required for financial sign-off | Sensitive data exposure and large extraction volumes |
| Masked Production Snapshots | Lower environments with compliance restrictions | Preserves realistic data distribution while protecting sensitive data | Masking logic can alter certain attributes |
| Synthetic Data | Early reconciliation design, edge-case testing, volume simulations | Safe to generate, supports controlled scenarios and rare financial patterns | Cannot replace real source-to-target financial validation |

Synthetic data is particularly useful for validating reconciliation logic before full migration loads occur, such as testing currency rounding scenarios or complex clearing chains.

For scenario-specific datasets, tools such as DataMaker can generate controlled financial transactions and repeatable validation cases. These datasets help stabilize reconciliation rules early, while real migrated data remains the final proof of financial correctness.
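A synthetic clearing chain is one example of a controlled, repeatable scenario. The sketch below is a hypothetical generator, not the API of DataMaker or any other tool: a fixed seed makes the output reproducible across reruns, and the final payment absorbs the remainder so the chain nets to exactly zero, which gives the reconciliation logic a known expected result.

```python
import random
from decimal import Decimal

CENT = Decimal("0.01")


def synthetic_clearing_chain(seed, invoice, payments):
    """Generate a hypothetical invoice cleared by `payments` payments.

    A fixed seed reproduces the same chain on every run. Partial payments
    take a random 1-40% share of the open amount, rounded to cents, and the
    final payment clears the remainder, so the chain nets to exactly zero.
    """
    rng = random.Random(seed)
    postings = [("invoice", invoice)]
    remaining = invoice
    for i in range(payments - 1):
        share = Decimal(rng.randint(1, 40)) / 100
        amount = (remaining * share).quantize(CENT)
        postings.append((f"partial_payment_{i + 1}", -amount))
        remaining -= amount
    postings.append(("final_payment", -remaining))
    return postings


chain = synthetic_clearing_chain(seed=7, invoice=Decimal("1000.00"), payments=4)
for label, amount in chain:
    print(label, amount)
```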

Step 5: Select the Right Tooling for the Validation Design

Tooling should follow reconciliation design, not define it.

For limited scope validations, such as a single company code or small data volume, controlled SQL extraction and comparison scripts may be sufficient. SAP’s Migration Cockpit reconciliation checks can also serve as an initial validation layer during early S/4HANA migration cycles.
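For the limited-scope case, a comparison script can be as simple as aggregating both sides to the same grain and reporting differences in both directions. The sketch below uses Python's built-in `sqlite3` with in-memory tables standing in for already-extracted data; the table names, columns, and figures are hypothetical, and amounts are stored as integer cents to avoid floating-point rounding. The `LEFT JOIN` plus `UNION ALL` pattern emulates a full outer join, which older SQLite versions do not support.

```python
import sqlite3

# In-memory stand-ins for already-extracted source and target data (hypothetical).
conn = sqlite3.connect(":memory:")
conn.executescript("""
CREATE TABLE src (company_code TEXT, account TEXT, period INTEGER, amount_cents INTEGER);
CREATE TABLE tgt (company_code TEXT, account TEXT, period INTEGER, amount_cents INTEGER);
INSERT INTO src VALUES ('1000', '400000', 12, 150000),
                       ('1000', '500000', 12, -90000),
                       ('1000', '600000', 12, 2500);
INSERT INTO tgt VALUES ('1000', '400000', 12, 150000),
                       ('1000', '500000', 12, -89000);
""")

# Aggregate both sides to the same grain, then report differences in both directions.
DIFF_SQL = """
WITH s AS (SELECT company_code, account, period, SUM(amount_cents) AS amt
           FROM src GROUP BY company_code, account, period),
     t AS (SELECT company_code, account, period, SUM(amount_cents) AS amt
           FROM tgt GROUP BY company_code, account, period)
SELECT s.company_code, s.account, s.period, s.amt, t.amt
FROM s LEFT JOIN t
  ON t.company_code = s.company_code AND t.account = s.account AND t.period = s.period
WHERE t.amt IS NULL OR ABS(s.amt - t.amt) > :tol
UNION ALL
SELECT t.company_code, t.account, t.period, NULL, t.amt
FROM t LEFT JOIN s
  ON s.company_code = t.company_code AND s.account = t.account AND s.period = t.period
WHERE s.amt IS NULL
ORDER BY 1, 2, 3
"""

rows = conn.execute(DIFF_SQL, {"tol": 1}).fetchall()  # tolerance: 1 cent
for row in rows:
    print(row)
# ('1000', '500000', 12, -90000, -89000)
# ('1000', '600000', 12, 2500, None)
```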

However, once scope expands to multiple company codes, multi-year history, or millions of documents across repeated mock cycles, manual approaches become difficult to maintain.

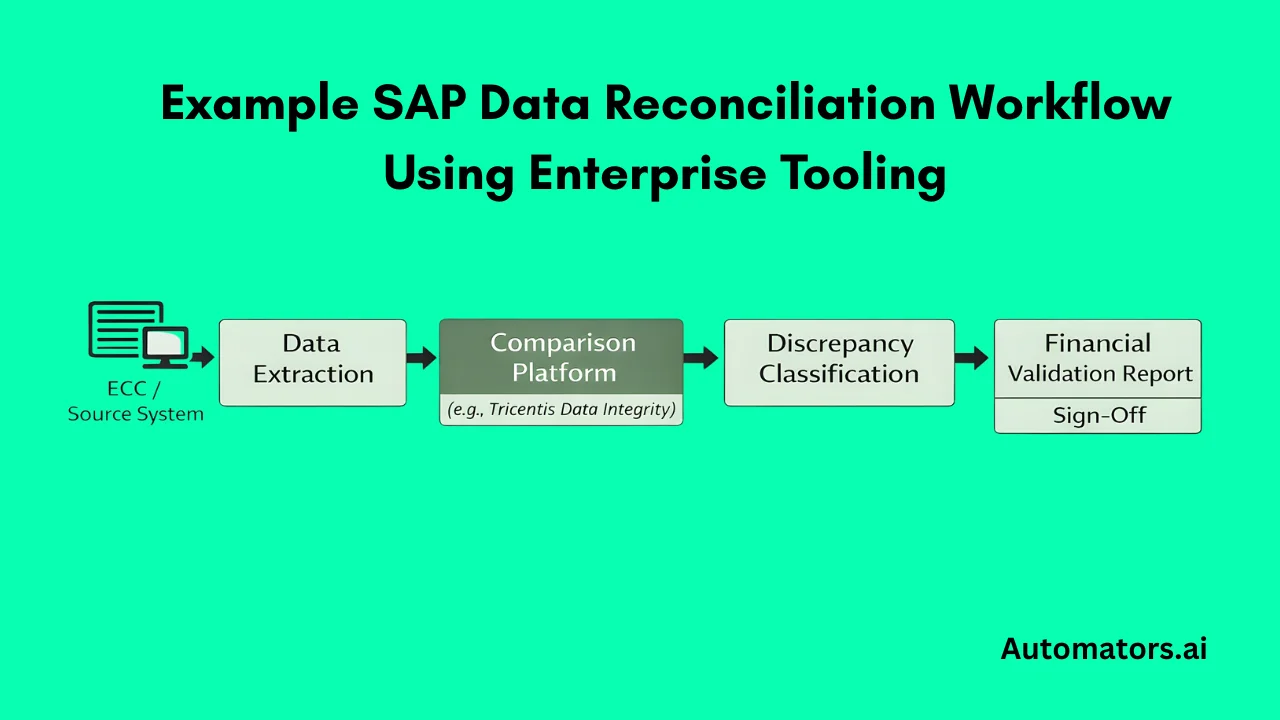

Enterprise reconciliation platforms provide scalable comparison and repeatable validation runs. In SAP landscapes, Tricentis Data Integrity supports:

- Controlled extraction from SAP systems

- Data model normalization between ECC and S/4HANA

- Configurable tolerance rules

- Large-volume comparison and discrepancy classification

- Repeatable execution across migration cycles

These platforms execute reconciliation logic at scale. The logic itself must still be defined by the program team.

Step 6: Control Extraction Timing and Freeze Conditions

Before running any comparison, ensure both source and target datasets represent the same logical cutoff point.

In migration scenarios, this often means coordinating extraction during mock cutover windows. If ECC data is extracted at one timestamp and S/4HANA data only after additional postings have occurred, discrepancies will appear even if the migration logic is correct.

Define clearly:

- The fiscal period being validated

- Whether postings are frozen during extraction

- Whether parked documents are included

- How subsequent postings are handled

For example, if validating period 12 balances, confirm no additional period 12 postings are processed in either system after extraction.

Without extraction discipline, reconciliation results become unreliable, and teams spend time investigating false differences.
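A simple extraction-discipline check is to look for postings in the validated period that were entered after the agreed cutoff, run against both source and target. This sketch assumes hypothetical posting records with a single entry timestamp and a status flag; in ECC, for instance, the entry date and time sit in separate BKPF fields (CPUDT and CPUTM) and would need to be combined first. Parked documents are excluded here, which is itself a scope decision to document.

```python
from datetime import datetime


def postings_after_cutoff(postings, fiscal_year, period, cutoff):
    """Return posted documents in the validated period entered after the cutoff.

    Each posting is a dict with hypothetical keys: 'doc', 'fiscal_year',
    'period', 'entered_at' (datetime) and 'status' ('posted' or 'parked').
    Parked documents are skipped; include them only if the agreed scope says so.
    """
    return [
        p for p in postings
        if p["fiscal_year"] == fiscal_year
        and p["period"] == period
        and p["status"] == "posted"
        and p["entered_at"] > cutoff
    ]


cutoff = datetime(2025, 1, 5, 18, 0)  # agreed extraction timestamp (hypothetical)
postings = [
    {"doc": "100001", "fiscal_year": 2024, "period": 12,
     "entered_at": datetime(2025, 1, 5, 17, 30), "status": "posted"},
    {"doc": "100002", "fiscal_year": 2024, "period": 12,
     "entered_at": datetime(2025, 1, 5, 19, 15), "status": "posted"},
    {"doc": "100003", "fiscal_year": 2024, "period": 12,
     "entered_at": datetime(2025, 1, 6, 9, 0), "status": "parked"},
]
late = postings_after_cutoff(postings, fiscal_year=2024, period=12, cutoff=cutoff)
print([p["doc"] for p in late])  # ['100002']
```

Any non-empty result on either side means the two datasets do not represent the same logical cutoff, and the comparison should be rerun before differences are investigated.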

Step 7: Run a Narrow Pilot Before Expanding Scope

Do not attempt full landscape validation immediately.

Start with a single high-impact slice, such as:

- GL balances for one company code and one ledger

- AP open items for a defined entity

- Inventory valuation for a specific plant

Execute the complete cycle: extract data, normalize structure if required, run comparison, review discrepancies, refine matching logic, and rerun.

The first comparison run will almost always surface discrepancies. Some will be genuine issues. Others will expose weaknesses in comparison logic.

The purpose of the pilot is to stabilize reconciliation rules before scaling. Once classification and tolerance behavior are stable in a narrow scope, expansion becomes controlled rather than chaotic.

Step 8: Scale Validation in Structured Waves

After the pilot logic is stable, expand gradually.

Increase scope by company code, fiscal year, or object type in controlled phases. Avoid opening all entities and all years simultaneously.

As discrepancies appear, classify them immediately based on root cause. A typical classification structure includes:

- Missing record in target

- Duplicate record in target

- Transformation or mapping issue

- Configuration-driven variance

- Currency translation difference

- Cutover timing mismatch

Each discrepancy should be traceable to:

- Identified root cause

- Assigned owner

- Remediation action

- Confirmation through rerun

Without disciplined classification, discrepancy reports become long lists with no resolution path.
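A discrepancy register can enforce the four traceability elements listed above. The sketch below is hypothetical: the cause categories mirror the classification list in this step, and an item counts as open until it has a root cause, an owner, a remediation action, and a confirming rerun.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class Cause(Enum):
    MISSING_IN_TARGET = "Missing record in target"
    DUPLICATE_IN_TARGET = "Duplicate record in target"
    MAPPING = "Transformation or mapping issue"
    CONFIGURATION = "Configuration-driven variance"
    CURRENCY = "Currency translation difference"
    CUTOVER_TIMING = "Cutover timing mismatch"


@dataclass
class Discrepancy:
    key: str
    cause: Optional[Cause] = None        # identified root cause
    owner: Optional[str] = None          # assigned owner
    remediation: Optional[str] = None    # remediation action
    confirmed_by_rerun: bool = False     # closed only after a clean rerun

    def missing_fields(self) -> list[str]:
        """List the traceability elements that are still open."""
        gaps = []
        if self.cause is None:
            gaps.append("root cause")
        if self.owner is None:
            gaps.append("owner")
        if self.remediation is None:
            gaps.append("remediation")
        if not self.confirmed_by_rerun:
            gaps.append("rerun confirmation")
        return gaps


def open_items(register):
    """Discrepancies that are not yet fully traceable and confirmed."""
    return [d for d in register if d.missing_fields()]


# Hypothetical register entries
register = [
    Discrepancy("CC1000/400000/P12", Cause.CURRENCY, "GL team",
                "Align translation rounding", confirmed_by_rerun=True),
    Discrepancy("CC1000/5100000002", Cause.MISSING_IN_TARGET, "Migration team"),
]
for item in open_items(register):
    print(item.key, item.missing_fields())
```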

Step 9: Formalize Finance Review and Sign-Off

Technical reconciliation alone does not eliminate financial risk. Validation must be reviewed and accepted in financial terms.

Before go-live, results should be presented in a format Finance can evaluate clearly. This typically includes:

- Control totals before and after migration

- Confirmation of document-level completeness where required

- Defined tolerance thresholds and justification

- A structured list of any remaining discrepancies

At this stage, a simple but strict KPI should apply:

Target zero material unresolved discrepancies for sign-off.

Minor rounding differences within approved tolerance may remain, but any discrepancy that impacts financial reporting or audit defensibility must be resolved before approval.

Finance must explicitly approve materiality thresholds and tolerance rules. Whether a rounding variance is acceptable, or whether a small balance difference can be carried forward, is a financial decision, not a technical one.
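The sign-off rule can be expressed as a gate that applies Finance-approved parameters without setting them. In this hypothetical sketch, unresolved differences at or below the approved tolerance may remain, differences above the approved materiality threshold block sign-off, and anything in between is routed to Finance for an explicit decision, such as carrying the difference forward. The three-bucket split and all figures are assumptions for illustration.

```python
from decimal import Decimal


def classify_for_signoff(open_differences, tolerance, materiality):
    """Sort unresolved differences into sign-off buckets (hypothetical rule set).

    open_differences: dict of description -> Decimal difference
    tolerance:   Finance-approved rounding tolerance; differences at or below it may remain
    materiality: Finance-approved materiality threshold; differences above it block sign-off
    Differences between the two are routed to Finance for an explicit decision.
    """
    if tolerance > materiality:
        raise ValueError("tolerance must not exceed materiality")
    buckets = {"within_tolerance": [], "finance_decision": [], "blocking": []}
    for description, difference in open_differences.items():
        size = abs(difference)
        if size <= tolerance:
            buckets["within_tolerance"].append(description)
        elif size <= materiality:
            buckets["finance_decision"].append(description)
        else:
            buckets["blocking"].append(description)
    return buckets


def ready_for_signoff(buckets):
    """Zero material unresolved discrepancies and no pending Finance decisions."""
    return not buckets["blocking"] and not buckets["finance_decision"]


buckets = classify_for_signoff(
    {
        "CC 1000 GL rounding": Decimal("0.01"),
        "CC 2000 AP open items": Decimal("-250.00"),
        "CC 3000 revenue": Decimal("48000.00"),
    },
    tolerance=Decimal("0.01"),
    materiality=Decimal("5000.00"),
)
print(buckets)
print(ready_for_signoff(buckets))  # False
```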

Formal sign-off confirms that reconciliation is not only technically complete, but financially acceptable.

Step 10: Extend the Framework Beyond Migration

A common mistake is treating data integrity testing as a migration-only task.

In reality, the same reconciliation structure you build for migration can become part of ongoing financial control.

After go-live, structured validation should be triggered whenever structural risk is introduced. This includes major transport releases, integration redesign, ledger configuration adjustments, or regulatory-driven reporting changes.

If reconciliation logic already exists, extending it into these change events requires far less effort than rebuilding controls reactively after discrepancies surface.

In mature SAP landscapes, data integrity validation becomes part of change governance. It shifts from emergency reconciliation to controlled assurance.

When to Call an Expert

Some SAP programs can design and execute reconciliation internally. This is often feasible in smaller environments with limited history and simple financial structures.

External expertise becomes valuable when reconciliation complexity increases, particularly in environments involving:

- Multiple ledgers or currencies

- Complex intercompany accounting

- Multi-year migration scope

- Repeated unexplained discrepancies during mock cycles

- Tight cutover timelines with limited remediation windows

In these situations, reconciliation design and automation configuration require specialized experience.

As a Tricentis partner, Automators provides enterprise SAP data integrity testing services using Tricentis Data Integrity alongside structured reconciliation methodology. This enables programs to design comparison logic correctly and execute large-scale validations across complex SAP landscapes.

The goal is not outsourcing responsibility, but reducing financial risk during transformation.

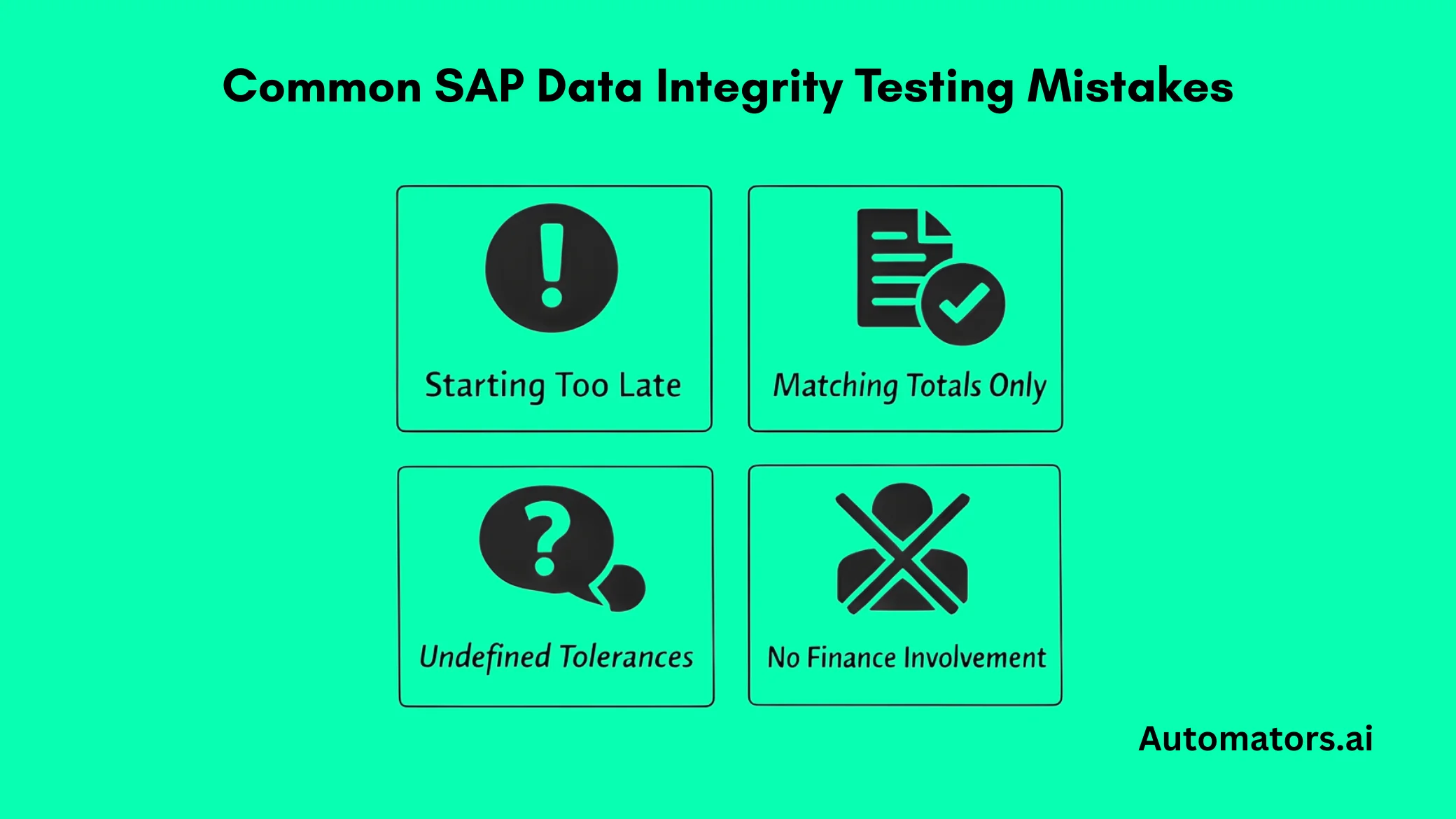

Common Mistakes That Undermine Reconciliation

Most reconciliation failures do not come from system limitations. They originate in planning and governance gaps.

One frequent issue is starting validation too late, often only weeks before go-live. At that stage, discrepancies become schedule threats rather than manageable findings.

Another is validating aggregate totals without verifying document completeness. Matching balances alone does not guarantee that no documents were lost, duplicated, or transformed incorrectly.

Undefined tolerance rules create unnecessary conflict. If rounding and currency handling are not agreed in advance, teams spend time debating acceptable differences instead of resolving actual errors.

Finally, excluding Finance from tolerance approval weakens the entire exercise. Technical reconciliation without financial ownership does not create assurance.

These mistakes are preventable. They are usually process failures, not system failures.

Final Perspective

SAP systems can function normally while financial inconsistencies remain invisible.

Functional testing confirms processes operate. Performance testing confirms the system scales. Data integrity testing confirms that financial results remain numerically correct.

In enterprise SAP programs, confidence must be demonstrated through structured validation, not assumed from system stability.

When reconciliation scope, matching logic, data strategy, tooling, and governance are defined clearly, data integrity testing becomes a control mechanism rather than a reactive troubleshooting exercise.

It is not an additional testing layer. It is financial risk management during change.