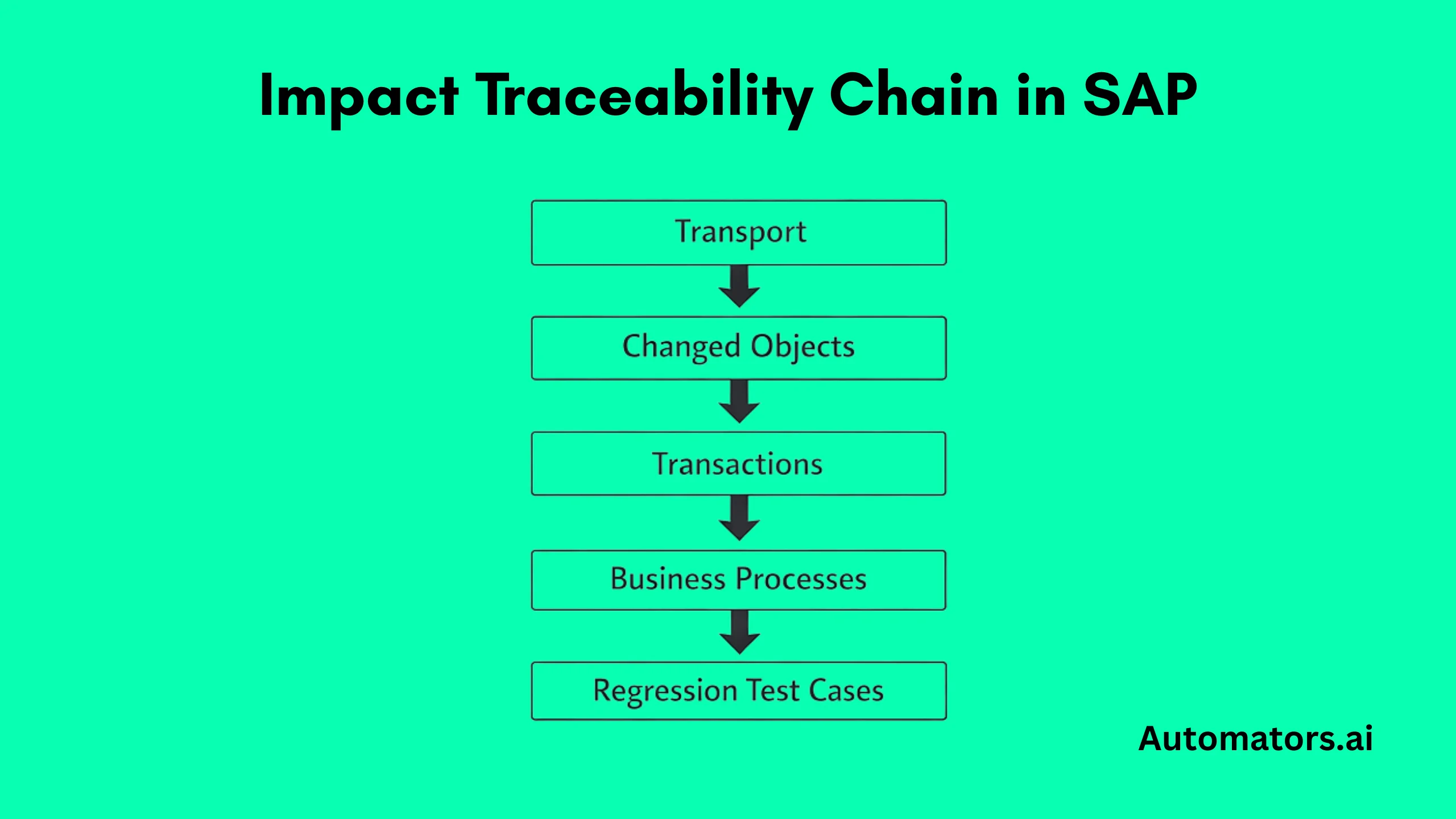

Change impact analysis in SAP is a process that identifies which parts of the system are affected by a specific change. When a transport, configuration update, or custom development is introduced, impact analysis determines which business processes and regression tests are exposed.

In practical terms, it answers one critical question: which tests actually need to run?

If impact analysis is not planned and implemented properly, teams either execute excessive regression tests or miss critical scenarios. Running too many tests increases release time and cost. Missing the right tests increases production risk. In complex SAP environments, neither outcome is acceptable.

Planning and implementing change impact analysis correctly ensures that the regression scope is defined based on evidence rather than assumptions. This article explains how to approach it in a structured way.

Is Your SAP Landscape Ready for Change Impact Analysis?

Before designing a process, evaluate whether your environment can support reliable impact analysis.

First, check traceability. When a transport moves into QA, can you trace the technical objects it modifies? Can those objects be linked to transactions and business processes? If that chain is unclear, the impact results will lack precision.
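As a rough illustration of that chain, the sketch below walks from a transport to its objects, then to transactions, then to business processes, and reports where the chain breaks. The transport ID, object names, and mapping tables are hypothetical placeholders; in a real landscape they would come from transport logs, where-used analysis, and process documentation.

```python
# Hypothetical traceability data, not taken from any real SAP system.
TRANSPORT_OBJECTS = {
    "DEVK900123": ["ZCL_PRICING_HELPER", "ZSD_ORDER_CHECK"],
}
OBJECT_TRANSACTIONS = {
    "ZCL_PRICING_HELPER": ["VA01", "VF01"],
    # ZSD_ORDER_CHECK is deliberately left unmapped to show a broken chain.
}
TRANSACTION_PROCESSES = {
    "VA01": "Order-to-Cash",
    "VF01": "Order-to-Cash",
}


def trace_transport(transport_id):
    """Follow transport -> objects -> transactions -> business processes.

    Returns the set of reachable processes and the list of objects whose
    chain stops before it reaches a transaction.
    """
    processes = set()
    untraced_objects = []
    for obj in TRANSPORT_OBJECTS.get(transport_id, []):
        transactions = OBJECT_TRANSACTIONS.get(obj, [])
        if not transactions:
            untraced_objects.append(obj)
            continue
        for tcode in transactions:
            process = TRANSACTION_PROCESSES.get(tcode)
            if process is not None:
                processes.add(process)
    return processes, untraced_objects


processes, untraced = trace_transport("DEVK900123")
print(sorted(processes))  # business processes the transport can reach
print(untraced)           # objects where traceability is missing
```

A non-empty list of untraced objects is the signal to look for: those are the changes for which any impact result would be a guess.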

Second, review your regression test library. Are test cases mapped to specific transactions or business processes, or are they grouped broadly under modules such as “Finance” or “Order-to-Cash”? Without structured mapping, impact output cannot reliably narrow regression scope.
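One way to make this review concrete is to measure how much of the test library is mapped below module level. The sketch below assumes a hypothetical export of the test library in which each test case carries a list of transaction codes; the field names and test IDs are illustrative only.

```python
# Hypothetical export of a regression test library.
TEST_CASES = [
    {"id": "TC-001", "module": "Finance", "transactions": ["FB60"]},
    {"id": "TC-002", "module": "Order-to-Cash", "transactions": []},
    {"id": "TC-003", "module": "Order-to-Cash", "transactions": ["VA01", "VF01"]},
]


def unmapped_test_cases(test_cases):
    """Return IDs of test cases that are only grouped under a module."""
    return [tc["id"] for tc in test_cases if not tc["transactions"]]


def mapping_coverage(test_cases):
    """Return the share of test cases linked to at least one transaction."""
    if not test_cases:
        return 0.0
    mapped = sum(1 for tc in test_cases if tc["transactions"])
    return mapped / len(test_cases)


print(unmapped_test_cases(TEST_CASES))
print(f"{mapping_coverage(TEST_CASES):.0%} of test cases are mapped")
```

Test cases that appear in the unmapped list cannot be selected or excluded by an impact result, so they tend to end up in every regression run.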

Third, assess visibility into custom developments. Many SAP systems contain years of enhancements. If dependencies are poorly documented or loosely governed, analysis tools may not capture the full impact.

Modern tools such as SAP Solution Manager’s Business Process Change Analyzer (BPCA) or Tricentis LiveCompare can infer dependencies through static analysis and usage data. However, even AI-assisted inference depends on structured objects and traceable test coverage. Tools cannot compensate for missing governance.

If transports, objects, processes, and test cases are not connected in a disciplined way, impact analysis will generate technical output but limited decision value.

How to Plan Change Impact Analysis in SAP

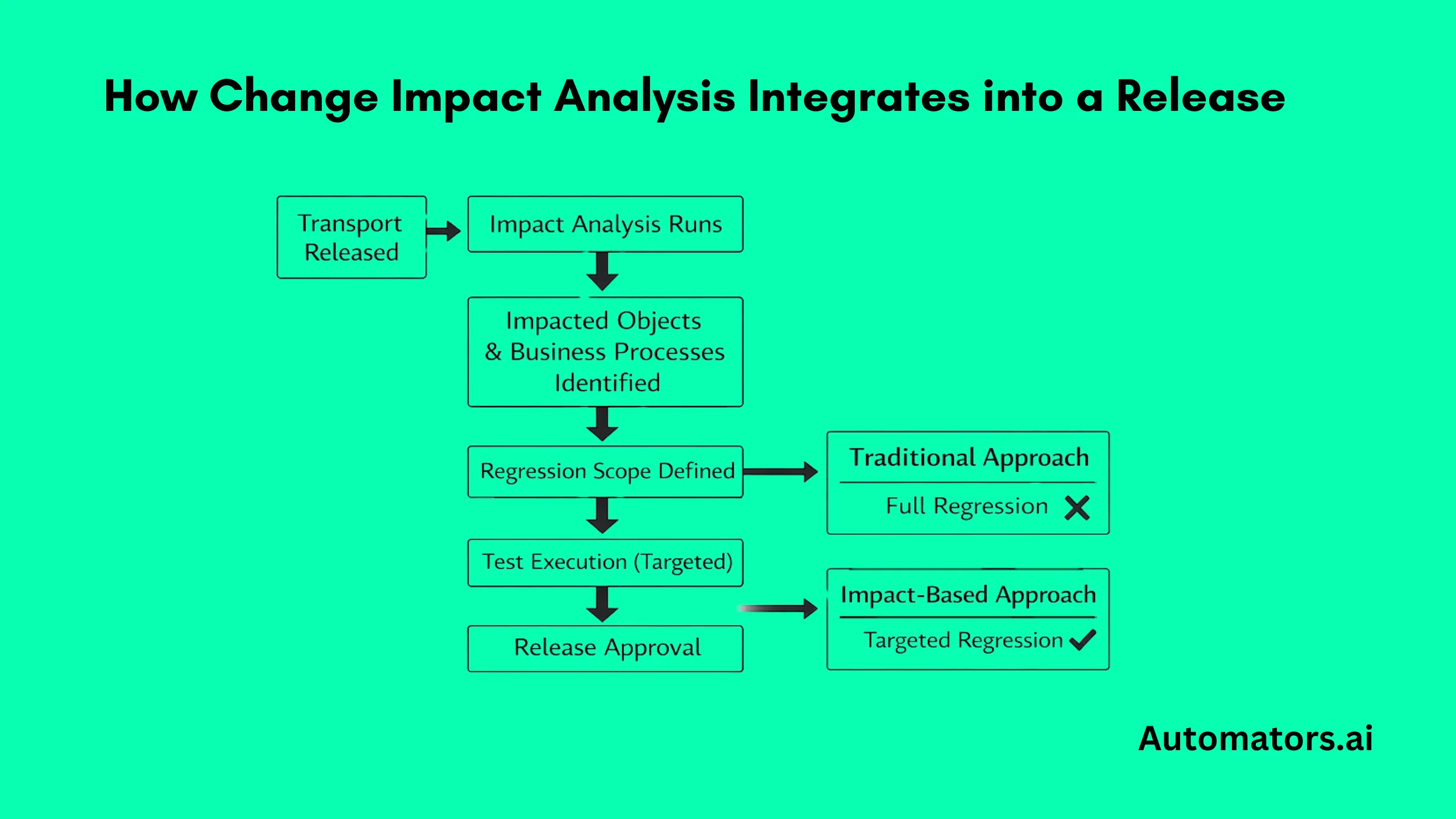

Once the foundation is stable, planning focuses on integration into release management.

Start with ownership. Define who runs the analysis, who reviews the results, and who approves regression scope adjustments. If these responsibilities are unclear, impact analysis becomes inconsistent.

Next, align it with transport management. In environments using SAP Solution Manager with ChaRM or Focused Build, impact analysis should be triggered before the regression scope is finalized. The results should be reviewed as part of test plan approval, not after execution has started.

Then define regression rules. For example:

- Will only impacted scenarios run?

- Will a minimum baseline always execute?

- Are certain high-risk processes included regardless of output?

These rules must be agreed upon in advance. Without predefined decision criteria, teams revert to full regression “to stay safe,” which defeats the purpose.
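To show how such rules can be written down as explicit decision criteria, here is a minimal sketch that combines impacted tests, a fixed baseline, and always-on high-risk tests into one scope. The function name, test IDs, and the three-rule structure are assumptions for illustration, not a feature of any specific tool.

```python
def select_regression_scope(impacted, baseline, high_risk):
    """Return {test_id: [reasons]} for every test that should run.

    A test is included if it is impacted, part of the agreed baseline,
    or flagged as high-risk regardless of the analysis output.
    """
    scope = {}
    for reason, tests in (
        ("impacted", impacted),
        ("baseline", baseline),
        ("high-risk", high_risk),
    ):
        for test_id in tests:
            scope.setdefault(test_id, []).append(reason)
    return scope


# Hypothetical test IDs.
scope = select_regression_scope(
    impacted=["TC-VA01"],
    baseline=["TC-LOGIN"],
    high_risk=["TC-VA01", "TC-PAYRUN"],
)
for test_id, reasons in sorted(scope.items()):
    print(test_id, reasons)
```

Recording the reason next to each test keeps the scope reviewable: an approver can see which tests run because of the change and which run because of a standing rule.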

Finally, determine frequency. In high-change environments, impact analysis should run each time a transport moves into QA. In structured release cycles, it can instead align with predefined testing milestones.

Planning is complete only when impact results clearly influence regression decisions.

How to Make It Work in Real Releases

Once the process is defined, it has to hold up during live release cycles. In practice, this comes down to four actions.

1. Run Impact Analysis Before Regression Planning Is Locked

Impact analysis must be executed early enough to influence the testing scope. If the regression scope is already approved and test preparation has started, analysis becomes informational rather than operational.

In environments using SAP Solution Manager with ChaRM or Focused Build, impact output should be reviewed during test plan approval. In setups using Tricentis LiveCompare, analysis should trigger automatically when transports reach QA.

The key principle is timing. Impact must inform decisions, not follow them.

2. Translate Technical Output Into Business Test Scope

Impact tools identify changed objects and dependencies. Testing teams work with scenarios and business processes. The gap between these two layers must be bridged.

Consider a transport modifying pricing configuration. Impact analysis identifies affected condition tables and related enhancements. Those technical findings must translate into impacted scenarios such as:

- Sales order validation (VA01)

- Billing execution (VF01)

- Revenue postings in FI

Regression scope is then adjusted accordingly. If technical results are not mapped to executable test cases, the value of impact analysis is lost.
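The pricing example can be sketched as a two-step lookup: impacted objects map to business scenarios, and scenarios map to executable test cases. The object labels, test IDs, and mapping tables below are hypothetical; the scenario names are the ones used above.

```python
# Hypothetical mappings for the pricing example.
OBJECT_SCENARIOS = {
    "pricing condition table": [
        "Sales order validation (VA01)",
        "Billing execution (VF01)",
    ],
    "pricing enhancement": [
        "Billing execution (VF01)",
        "Revenue postings in FI",
    ],
}
SCENARIO_TESTS = {
    "Sales order validation (VA01)": ["TC-SD-010"],
    "Billing execution (VF01)": ["TC-SD-020", "TC-SD-021"],
    # "Revenue postings in FI" has no test case yet.
}


def to_test_scope(impacted_objects):
    """Map impacted objects to test cases and list scenarios without tests."""
    tests = []
    uncovered = []
    seen_scenarios = set()
    for obj in impacted_objects:
        for scenario in OBJECT_SCENARIOS.get(obj, []):
            if scenario in seen_scenarios:
                continue  # scenario already handled via another object
            seen_scenarios.add(scenario)
            cases = SCENARIO_TESTS.get(scenario, [])
            if not cases:
                uncovered.append(scenario)
            for case in cases:
                if case not in tests:
                    tests.append(case)
    return tests, uncovered


tests, uncovered = to_test_scope(["pricing condition table", "pricing enhancement"])
print(tests)      # test cases to add to the regression scope
print(uncovered)  # impacted scenarios with no executable test case
```

The second return value matters as much as the first: an impacted scenario with no test case is a coverage gap that the analysis has exposed, not a reason to drop the scenario from scope.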

3. Align Automation and Manual Regression

In automated environments, impacted scripts should be dynamically selected based on analysis results. In manual environments, regression plans must be updated before execution begins.

If automation still runs full regression despite the impact output, efficiency gains are not realized. The same applies to manual testing when teams revert to broad coverage out of caution.

Impact analysis works only when regression execution follows its logic.

4. Ensure Test Data Is Ready for Impacted Scenarios

A narrowed regression scope is effective only if impacted scenarios can be executed immediately. Delays in test data preparation reduce the benefit of impact-driven testing.

If specific financial structures, customer records, or pricing combinations are required for impacted flows, those datasets must be available in advance. Structured test data management supports this transition and prevents execution bottlenecks.
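A simple readiness check can flag impacted scenarios whose data is not yet available before execution starts. The sketch below assumes hypothetical dataset labels and a hypothetical requirements table per scenario; it is not tied to any particular test data tool.

```python
# Hypothetical data requirements per scenario and currently available datasets.
REQUIRED_DATA = {
    "Sales order validation (VA01)": {"customer record", "pricing combination"},
    "Revenue postings in FI": {"financial structure"},
}
AVAILABLE_DATA = {"customer record", "financial structure"}


def data_gaps(scenarios, required, available):
    """Return {scenario: [missing datasets]} for scenarios that cannot run yet."""
    gaps = {}
    for scenario in scenarios:
        missing = required.get(scenario, set()) - available
        if missing:
            gaps[scenario] = sorted(missing)
    return gaps


impacted = ["Sales order validation (VA01)", "Revenue postings in FI"]
print(data_gaps(impacted, REQUIRED_DATA, AVAILABLE_DATA))
```

Running a check like this when the impact result arrives, rather than when execution begins, gives the data team time to close the gaps.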

Common Mistakes That Reduce the Value of Your Effort

Several recurring patterns limit the effectiveness of change impact analysis.

1. Treating It as a Reporting Exercise

Running impact analysis and storing the output without adjusting the regression scope does not improve release control. If testing effort remains unchanged, the process is not influencing decisions.

Impact analysis must shape what is tested.

2. Weak Traceability Between Objects and Test Cases

If test cases are not clearly linked to transactions and processes, impact results cannot reliably narrow the scope. In such cases, teams either over-test to stay safe or risk missing dependencies.

Traceability is not optional; it is the foundation of meaningful impact analysis.

3. Underestimating Custom Developments

Many SAP landscapes contain extensive custom code and enhancements. Undocumented dependencies reduce the completeness of analysis results.

Without visibility into custom objects, impact output may overlook indirect effects across modules.

4. Ignoring Release Governance

Impact analysis should be part of release approval, not an optional review. When ownership and review steps are unclear, analysis becomes inconsistent across releases.

Governance ensures repeatability.

5. Overlooking Test Data Readiness

Even when the impact scope is correctly defined, regression execution may stall due to missing or non-compliant datasets.

Impact-driven testing assumes execution readiness. Without structured test data support, efficiency gains are limited.

When Structured Support Makes Sense

In large SAP programs, the challenge is often alignment rather than awareness. Coordinating transport workflows, traceability models, test libraries, and impact tooling across teams and modules requires structured effort.

External expertise can help define traceability models, integrate impact analysis into release cycles, and ensure regression scope decisions are consistent and evidence-based.

At Automators.ai, change impact analysis is delivered as part of structured SAP testing services. The focus is on embedding impact results into real release decisions, not just generating technical output.

Where needed, this approach is supported by controlled test data generation through DataMaker to ensure impacted scenarios can be executed efficiently and safely.

The objective is not to replace internal teams, but to establish a repeatable and sustainable process.

Final Thoughts

Change impact analysis in SAP is not only about testing less. It is about testing the right things.

When planned properly and integrated into release governance, it reduces unnecessary regression effort while improving confidence in system stability.

If your organization is using impact analysis but the regression scope remains broad and unchanged, it may be worth reviewing how the process is designed and implemented.

Effective change control depends not only on analysis, but on disciplined execution.